Picture this: a marketing manager develops a campaign strategy. It gets explained verbally to a content specialist, who turns it into a brief for an agency, which produces three concepts for the brand manager, who gives feedback to the agency, which delivers a final version to the campaign manager.

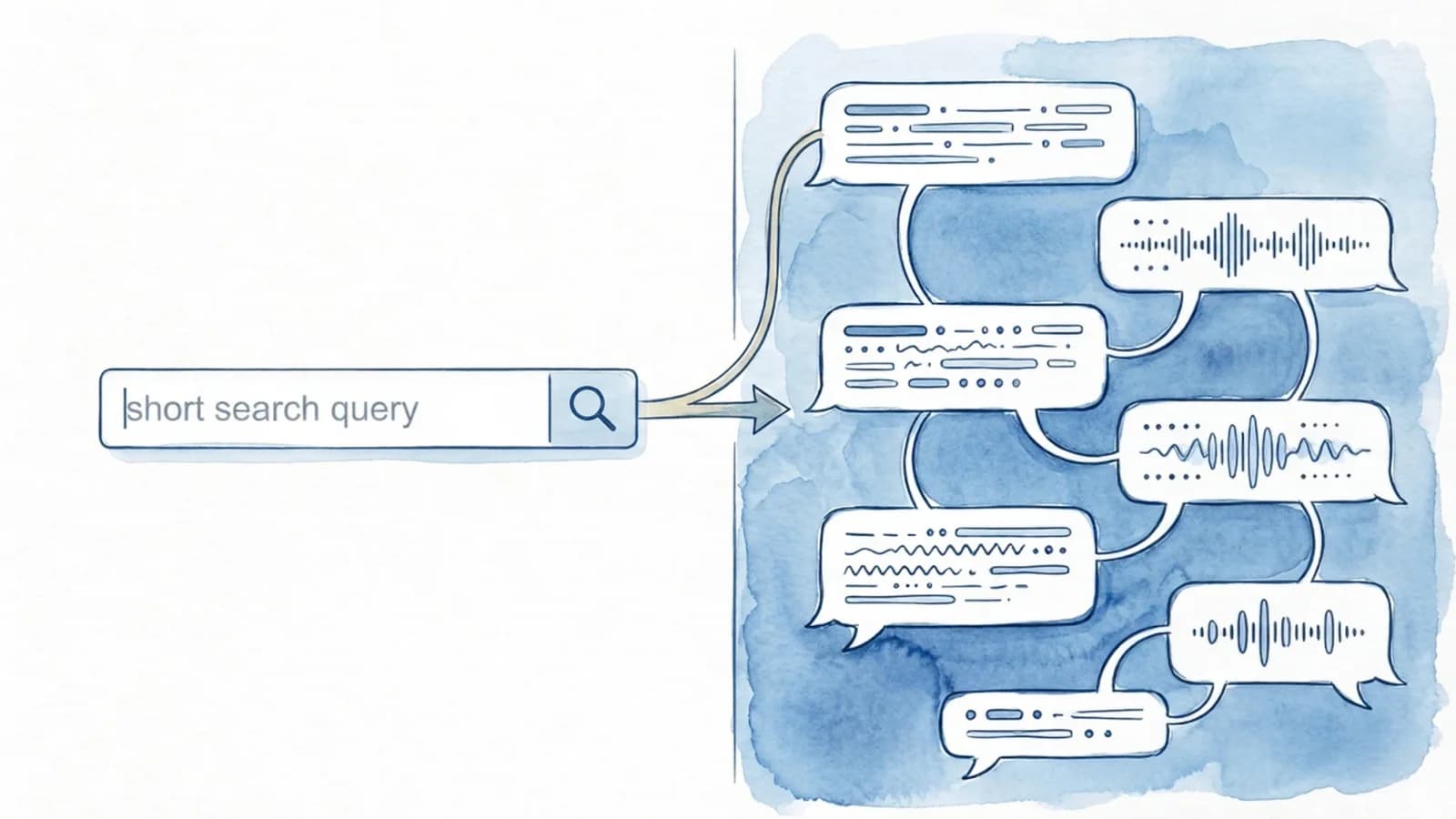

Sound familiar? Every step in this process is a handoff. And at every handoff, context gets lost. The nuance of the strategy evaporates in the brief. Feedback gets interpreted differently than intended. The final version drifts from the original idea. Not because anyone does poor work, but because information leaks at every handover to the next person.

Chris Geoghegan, VP of Product at Zapier, gave this phenomenon a fitting name at GitKon 2025: context bleeding. His observation: individual AI adoption delivers individual gains. But only when an entire team shares context — and uses AI to safeguard that context — does real transformation happen.

The default move for most teams is to deploy AI at the individual level: write faster, analyze faster, report faster. One person plus AI. That speeds things up, but it doesn't change how the team works.

The shift that's possible: deploy AI at the boundaries too. Not just with the content writer, but at the handoff from strategy to brief. Not just with the data analyst, but at the translation from data to action for the team. Not just with the campaign manager, but at the presentation of results to management.

AI at the boundaries means making sure context doesn't leak when work passes from one person to the next.

What this looks like in practice

Let's get concrete. Here are three marketing scenarios where AI at the handoff makes the difference:

1. From strategy to brief. A marketing director discusses the quarterly strategy in a meeting. Instead of someone taking notes that capture half of what was said, you let AI summarize the conversation into a structured brief: objectives, target audience, key messages, constraints, and, crucially, the open questions that still need answers. The content creator doesn't start with a half-baked note, dependent on whatever made it onto paper, but with a complete starting point.

2. From concept to feedback. An agency delivers three campaign concepts. Instead of scattered emails saying "I think concept 2 is strongest, but can you adjust that one thing?", you use AI to structure the feedback: for each concept, its strengths, the required changes, how it relates to the original brief, and a clear prioritization. The agency doesn't get a puzzle to solve; it gets a workable assignment.

3. From results to reporting. The campaign is over. The data analyst has the numbers. Instead of handing over a spreadsheet that is open to interpretation, you let AI translate the results, using CSV exports from campaign managers among other sources, into a narrative that connects back to the original objectives. What was the goal? What was achieved? What's the recommendation? Leadership doesn't have to guess what the numbers mean; they get it in plain language.
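For readers who want to see what "connecting back to the original objectives" involves before any AI writes a word, here is a minimal sketch of that preparation step. It assumes a CSV export with hypothetical columns `metric` and `actual`, plus a set of targets copied from the brief; the column names, metrics, and figures are all invented for illustration, and the function name `compare_to_objectives` is hypothetical. The output is a short list of plain-language lines you could hand to an AI tool (or a colleague) as the backbone of the narrative.

```python
import csv
import io

# Hypothetical CSV export: column names and figures are invented for illustration.
SAMPLE_EXPORT = """metric,actual
clicks,4200
signups,310
cost_per_signup,14.50
"""

# Hypothetical objectives, copied from the original brief.
OBJECTIVES = {
    "clicks": 5000,
    "signups": 300,
}


def compare_to_objectives(csv_text: str, objectives: dict[str, float]) -> list[str]:
    """Return one plain-language line per objective, comparing the result to its target."""
    actuals = {
        row["metric"]: float(row["actual"])
        for row in csv.DictReader(io.StringIO(csv_text))
    }
    lines = []
    for metric, target in objectives.items():
        if metric not in actuals:
            # Flag gaps instead of skipping them: a missing number is an open question.
            lines.append(f"{metric}: no data in export (target {target:g})")
            continue
        actual = actuals[metric]
        pct = actual / target * 100
        # Assumes "higher is better" for every objective.
        status = "met" if actual >= target else "missed"
        lines.append(f"{metric}: {actual:g} vs target {target:g} ({pct:.0f}%, {status})")
    return lines


if __name__ == "__main__":
    for line in compare_to_objectives(SAMPLE_EXPORT, OBJECTIVES):
        print(line)
```

Note that metrics in the export without an objective (here `cost_per_signup`) are left out on purpose: the report starts from what was promised, not from whatever the export happens to contain. The sketch treats every objective as "higher is better"; cost-type metrics would need the comparison reversed.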

Which tools help with this?

It starts with capturing. You can't safeguard context if you don't capture it first. Fortunately, there's now a tool for almost every situation that does this automatically.

Built-in AI in your meeting platform. If you're already using Microsoft Teams or Google Meet, you have the fastest route. Microsoft Copilot automatically generates summaries, action items, and searchable transcripts from Teams meetings. Google Gemini does the same within Google Meet and saves transcripts as Google Docs you can share directly. Zoom has a similar AI Companion that generates summaries for paid accounts. The advantage: no extra tool, no bot joining your meeting, everything stays within your existing ecosystem.

Physical recording devices for in-person conversations. Not every handoff happens online. For face-to-face meetings, client visits, or brainstorm sessions, a device like the Plaud NotePin or Plaud Note Pro is worth considering. You clip it to your clothing or place it on the table, and it records, transcribes, and automatically generates a summary via AI. Plaud offers thousands of summary templates for different contexts.

The transcript is just the beginning. Capturing it is step one; the real value comes from what you do with it.

The second mistake: expecting it to be perfect on the first try

There's another common mistake closely related to this. Teams try AI, get a first output, find it "decent but not good enough," and conclude that AI doesn't work for their context.

That's like giving a new colleague a project on day one, reviewing the result, and deciding they're not the right fit.

The same pattern shows up at the organizational level: one team runs a successful experiment, but the rest of the organization does nothing with it. Not because the technology fails, but because no one translates the approach to other handoffs. Teams get stuck in isolated pilots that don't scale.

AI output is a starting point. The teams that get the most out of it work iteratively:

Capture: Record the conversation, strategy, or input in a structured format, and give the AI the full picture. Not "write a brief," but "here's the context, here are the goals, turn it into a spec with the open questions."

Refine: Enrich the first version. Add missing context, process feedback, make technical or creative decisions. This is where human expertise makes the difference. (Even better if you add all relevant information to something like a project in Claude, so it always has the full context available.)

Decompose: Break the whole into workable units. Not one big brief, but specific assignments per person, per channel, per audience, per phase, etc.

Implement: Only then do you execute. With context intact, with clear scope, and with a human check as the final step.

This might sound like a waterfall model, but it's actually the opposite. Every step is a feedback loop. It's about iterating deliberately, not prompting once and hoping for the best.
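The difference in the Capture step between a bare "write a brief" and a context-rich request can be sketched as a small template. This is a minimal illustration under stated assumptions, not a feature of Claude, Copilot, or any other tool: the function `build_capture_prompt` and the section list are hypothetical, with the sections borrowed from the brief structure in scenario 1. It bundles the captured context, the goals, and the required sections into one request, and it explicitly asks for open questions.

```python
# Hypothetical section list, mirroring the brief structure from scenario 1.
BRIEF_SECTIONS = (
    "Objectives",
    "Target audience",
    "Key messages",
    "Constraints",
    "Open questions",
)


def build_capture_prompt(
    context: str,
    goals: list[str],
    sections: tuple[str, ...] = BRIEF_SECTIONS,
) -> str:
    """Assemble a context-rich request instead of a bare 'write a brief'."""
    goal_lines = "\n".join(f"- {goal}" for goal in goals)
    section_lines = "\n".join(
        f"{number}. {name}" for number, name in enumerate(sections, start=1)
    )
    return (
        "Here is the context:\n"
        f"{context.strip()}\n\n"
        "Here are the goals:\n"
        f"{goal_lines}\n\n"
        "Turn this into a brief with exactly these sections:\n"
        f"{section_lines}\n\n"
        "Under 'Open questions', list everything the context leaves unresolved. "
        "Do not fill gaps with assumptions."
    )


if __name__ == "__main__":
    print(build_capture_prompt("(paste meeting transcript here)", ["Grow trial sign-ups"]))
```

The design choice that matters most is the last instruction: asking explicitly for open questions keeps the gaps visible for the Refine step instead of letting the model paper over them.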

What you can do tomorrow

Map out where handoffs occur in your marketing process. Chances are you'll find more than you expect: from strategy to planning, from planning to brief, from brief to creation, from creation to review, from review to publication, from publication to analysis, from analysis to optimization.

Pick one. The handoff that goes wrong most often. And experiment with AI not as a writing machine, but as a context guardian: a tool that ensures what one person means actually reaches the next person intact.

And if the first output isn't perfect? Good. That's the starting point. Iterate. Refine. Make it better, and use AI to accelerate a deliberate process.

And honestly, I just had a meeting with Peter after which I kicked myself for neither having AI listen in nor taking notes myself. So in a way, I'm writing this article as a lesson for myself too. Tomorrow I'll do better.